AI Exchange @ UVA [2026.8]

Our mission is simple: to keep the UVA community informed, engaged, and inspired as we navigate this transformation together.

Teaching Spotlight: Rethinking Teaching and Learning in an AI Era

AI in Education: From Classrooms to Communities: In this edition, Peter Youngs and Catherine P. Bradshaw share how artificial intelligence is reshaping teaching, teacher development, and student well-being. Their conversation explores AI-powered coaching for educators, the use of machine learning in behavioral and mental health interventions, and how universities can responsibly adapt curriculum, research, and policy to support learners in a rapidly changing digital landscape.

🔑 Key Insights

Teaching Is Being Redefined

Peter Youngs explained that as AI expands student access to information, teachers must focus more on critical thinking, problem-solving, and helping students evaluate competing perspectives rather than simply delivering content.

AI Is Becoming Educational Infrastructure

Through faculty work groups and research initiatives, AI is being integrated into curriculum redesign, teacher coaching, and grant development, with examples ranging from multimodal classroom analysis to rapid-response research teams led by Catherine Bradshaw.

Responsible Use Is Essential

Bradshaw emphasized that while AI holds promise for education and mental health support, students are already turning to tools not designed by specialists, making research-first implementation, media literacy, and policy guardrails critical.

AI & Education Work Group

Peter Youngs and Catherine Bradshaw co-lead an open, cross-disciplinary work group that brings together faculty and students to rethink courses, develop new programs, share research in progress, and quickly form teams for AI-focused grant opportunities.

👉 Main idea: AI is transforming teaching, learning, and student support, requiring educators and institutions to adapt thoughtfully as the technology evolves.

“A Semantic and Motion-Aware Spatiotemporal Transformer Network for Action Detection”

This paper, co-authored by Peter Youngs, presents a novel spatiotemporal transformer network that introduces several original components to detect actions in untrimmed videos.

AI Research: AI boosts individual scientists—but makes science more crowded and less adventurous

Hao, Q., Xu, F., Li, Y., & Evans, J. (2026). Artificial intelligence tools expand scientists’ impact but contract science’s focus.

(This paper formalizes the “lonely crowds” intuition, with hard numbers behind it.)

💡 The Big Idea

AI adoption in the natural sciences creates a tension: it supercharges individual careers and paper impact, but it narrows the collective exploration of ideas. The authors argue that AI pushes effort toward data-rich, “well-lit” problems, producing faster incremental progress—while leaving more speculative or data-poor frontiers behind.

🧪 How they did it

Analyzed 41.3M natural-science papers (1980–2025) from OpenAlex (validated with Web of Science), across biology, medicine, chemistry, physics, materials science, and geology.

Used a fine-tuned BERT model to identify “AI-augmented” papers from titles/abstracts (expert-validated F1 = 0.875).

Compared outcomes for AI vs non-AI papers/researchers: productivity, citations, career transitions (junior → project leader), topic breadth, and citation-network structure.

Measured “knowledge extent” by embedding papers into a scientific vector space (SPECTER 2.0) and estimating how much topical territory a set of papers covers.
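As a rough illustration of the “knowledge extent” idea, the sketch below treats each paper as an embedding vector and measures how far a set of papers spreads from its centroid. The `knowledge_extent` function, the toy 2-D vectors, and the max-distance-from-centroid definition are all assumptions made for illustration; the authors’ exact measure and the SPECTER 2.0 embeddings are not reproduced here.

```python
import math


def centroid(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def knowledge_extent(vectors):
    """Hypothetical proxy: largest Euclidean distance from the centroid."""
    center = centroid(vectors)
    return max(math.dist(v, center) for v in vectors)


# Toy 2-D "embeddings" (made up); real paper embeddings have far more dimensions.
focused_papers = [[0.0, 0.0], [0.2, 0.0], [0.0, 0.2]]
broad_papers = [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0]]

# A tightly clustered set of papers covers less territory than a spread-out one.
print(knowledge_extent(focused_papers) < knowledge_extent(broad_papers))
```

Under this proxy, a set of papers clustered around one topic scores lower than a set scattered across topics, which is the direction of the effect the paper reports for AI-augmented research.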

📈 Key findings

AI-using scientists publish 3.02× more papers and receive 4.84× more citations than non-adopters.

Junior researchers who adopt AI become leaders 1.37 years earlier (expected 7.33 vs 8.70 years to transition).

AI-augmented work is associated with smaller teams (about 1.33 fewer scientists on average), driven mostly by fewer junior coauthors (from 2.89 → 1.99).

Collectively, AI adoption shrinks topical breadth: median “knowledge extent” is 4.63% lower for AI research, and topic distribution is more concentrated (lower entropy).

Follow-on science becomes less interconnected: AI research shows 22% less “follow-on engagement” among papers citing the same prior work—more star-shaped “hub” attention than a dense, interacting research conversation.

Attention also concentrates into “superstar” papers: the top 22.20% of AI papers receive 80% of citations, and the top 54.14% receive 95%. Citation inequality is higher (Gini 0.754 vs 0.690 for non-AI).
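For readers unfamiliar with the concentration metrics in these findings, here is a minimal sketch of how a Gini coefficient, a “top share” of papers, and a topic entropy can be computed. The citation and topic counts are made-up toy values, and the function names are illustrative; this is not the authors’ code or data.

```python
import math


def gini(values):
    """Gini coefficient of non-negative values (0 = equal, near 1 = concentrated)."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum((i + 1) * x for i, x in enumerate(xs))
    return (2 * weighted) / (n * total) - (n + 1) / n


def top_fraction_for_share(values, share):
    """Smallest fraction of items, ranked highest first, reaching `share` of the total."""
    xs = sorted(values, reverse=True)
    total = sum(xs)
    if total == 0:
        return 0.0
    running = 0
    for k, x in enumerate(xs, start=1):
        running += x
        if running >= share * total:
            return k / len(xs)
    return 1.0


def shannon_entropy(counts):
    """Entropy in bits of a count distribution; lower means more concentrated."""
    total = sum(counts)
    return sum(-(c / total) * math.log2(c / total) for c in counts if c > 0)


citations = [60, 25, 10, 3, 2]   # toy citation counts for five papers
topic_counts = [4, 0, 0, 0]      # toy case: every paper on a single topic

print(gini(citations))
print(top_fraction_for_share(citations, 0.80))
print(shannon_entropy(topic_counts))
```

In this toy data, two of the five papers (40%) account for at least 80% of citations, and the single-topic distribution has zero entropy.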

⚖️ Why it matters

If the incentives reward AI adoption (more papers, more citations, earlier leadership), the system may unintentionally steer talent toward optimizing what’s measurable rather than discovering what’s unknown.

A narrower search pattern risks science getting stuck on “local maxima”: lots of progress on today’s benchmarks, less exploration of alternative questions and methods.

Reduced engagement plus more overlap can mean wasted effort (an “everyone climbing the same popular peaks” dynamic) without the usual cross-citation that stitches a new field together.

For policy and funding: the authors flag a likely “left-behind” zone—important questions with limited data (e.g., origins/foundational phenomena), where AI tools currently have less leverage.

Preview: State of AI Research Report

🚀 State of AI Research Report: Launching Feb. 19th

2020-2025 Research Analysis

An analysis of all papers with AI as a topic from arxiv.org suggested that the “Scientific Migration” has triggered a pivot.

Half of 2023’s first-time LLM paper authors came from outside NLP and traditional AI fields. Physicists, economists, biologists—everyone is getting in.

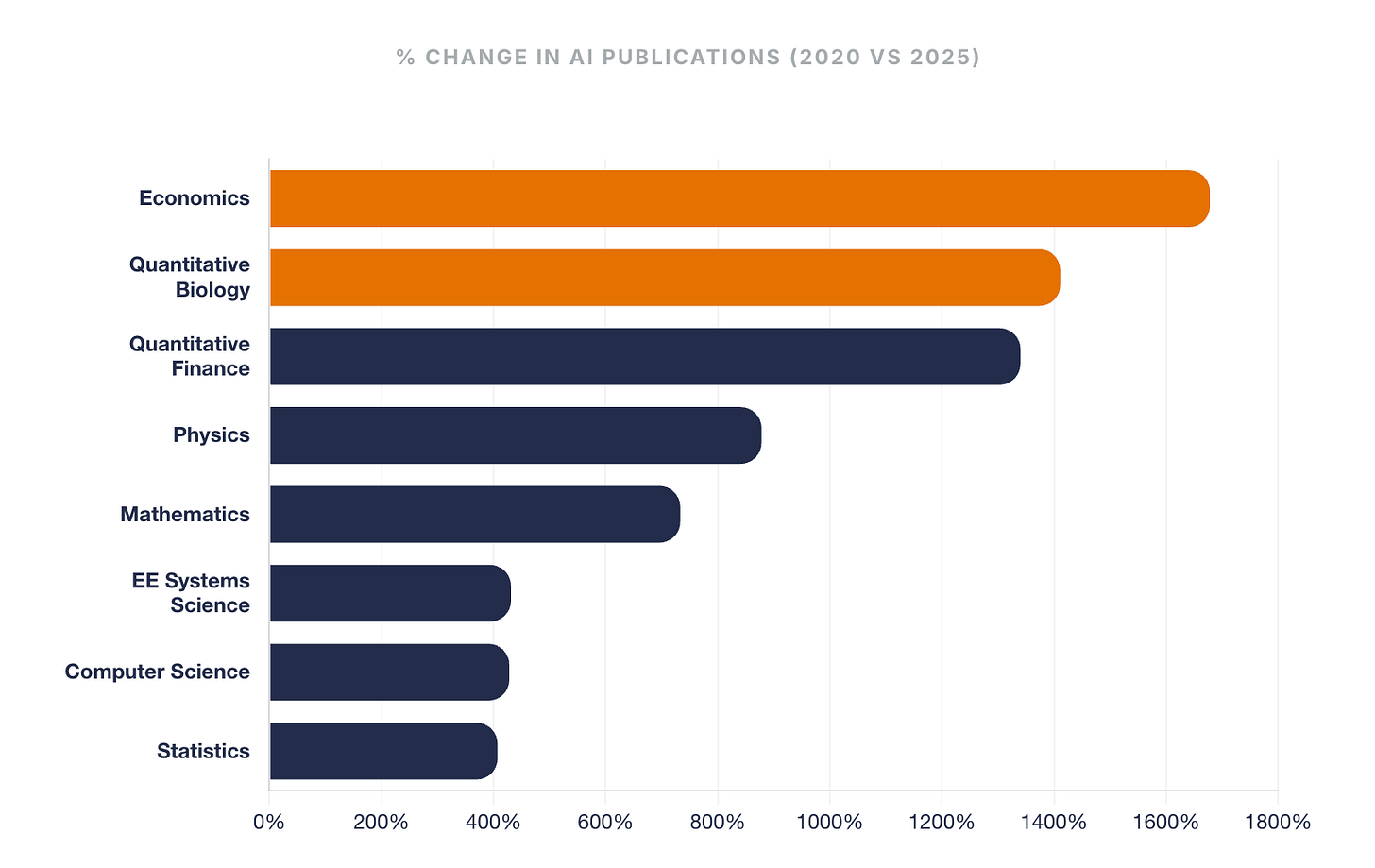

Here is the % increase in AI-related research volume since 2020:

🔹 Economics: +1,678%

🔹 Quantitative Biology: +1,411%

🔹 Quantitative Finance: +1,340%

🔹 Physics: +878%

🔹 Mathematics: +733%

🔹 EE Systems Science: +431%

🔹 Computer Science: +428%

🔹 Statistics: +407%

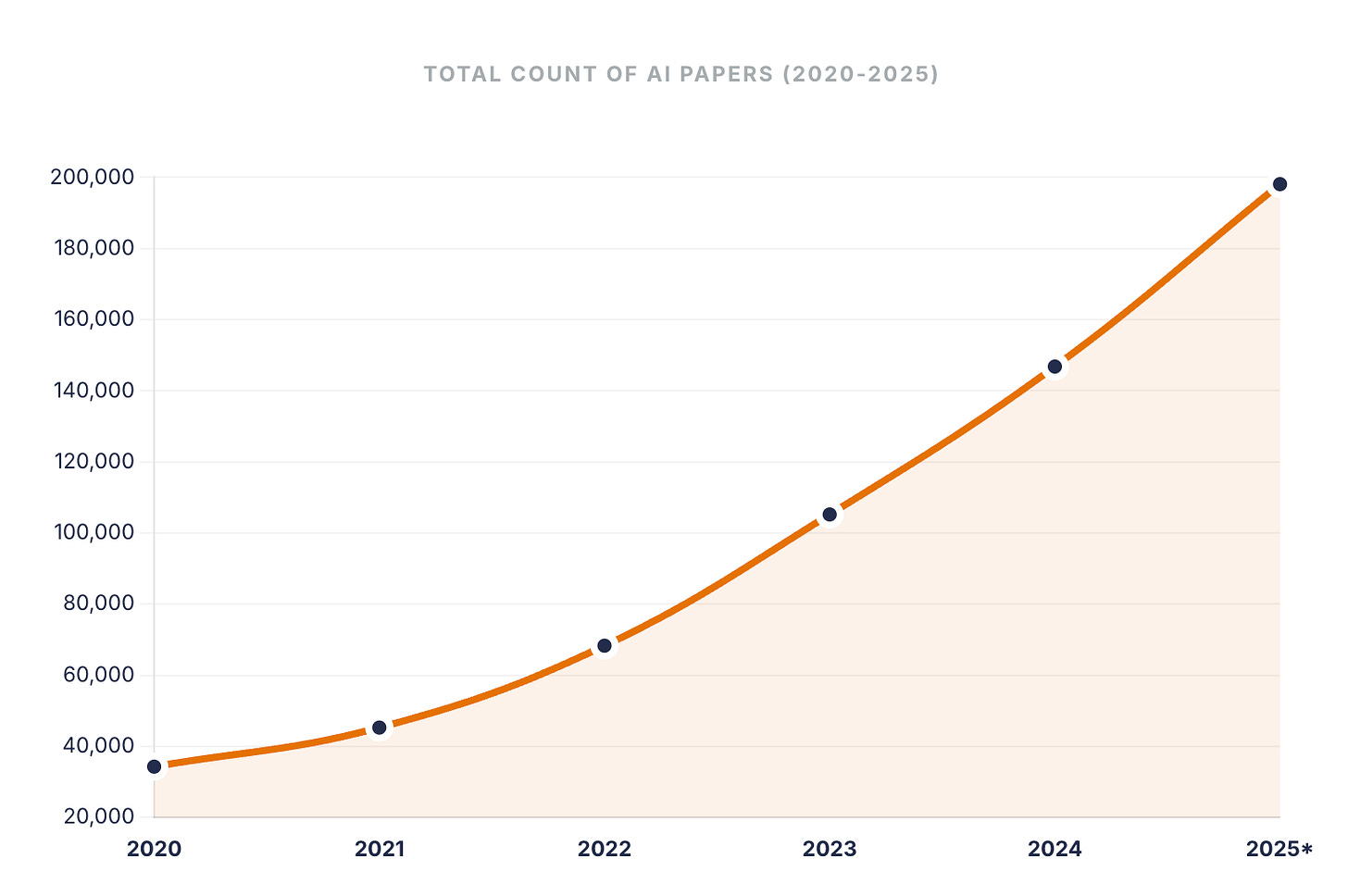

📈 The Volume Surge

Prior to 2022, AI research on arXiv followed a steady growth curve. Following model breakthroughs in late 2022, we are seeing an exponential scaling that covers all eight primary disciplines.

💡 The Big Idea

We’ve moved past the phase of “experimentation.” Fields like Economics and Quantitative Biology are scaling AI-led research at over 3x the relative velocity of core CS.
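The “over 3x” comparison can be checked directly against the growth figures listed in this preview. The sketch below assumes “relative velocity” means the ratio of each field’s percentage increase to that of Computer Science; that reading is an assumption, not a definition given in the report.

```python
# Percentage increase in AI-related research volume since 2020 (from the list above).
growth_pct = {
    "Economics": 1678,
    "Quantitative Biology": 1411,
    "Quantitative Finance": 1340,
    "Physics": 878,
    "Mathematics": 733,
    "EE Systems Science": 431,
    "Computer Science": 428,
    "Statistics": 407,
}

# Assumed definition: a field's growth divided by Computer Science's growth.
baseline = growth_pct["Computer Science"]
relative = {field: pct / baseline for field, pct in growth_pct.items()}
over_3x = [field for field, ratio in relative.items() if ratio > 3]

for field, ratio in sorted(relative.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{field}: {ratio:.2f}x")
```

On these figures, Economics, Quantitative Biology, and Quantitative Finance each come out above 3x the Computer Science rate.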

How is your industry adapting to this acceleration?

AI by the Numbers

The Agentic Economy: First Empirical Evidence

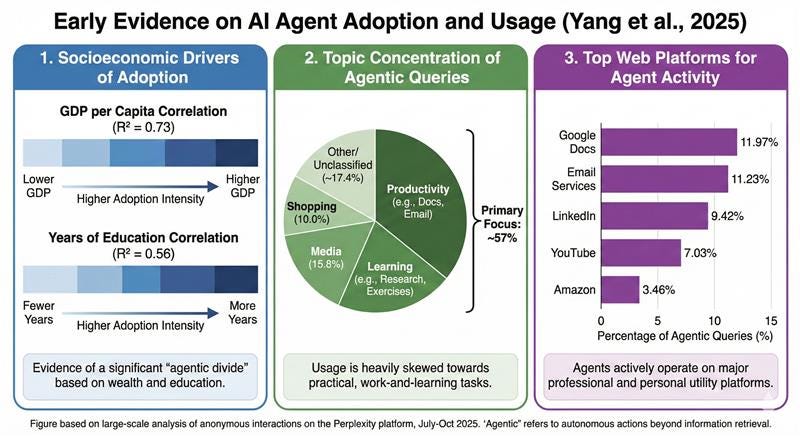

Yang et al. (2025) provide the first large-scale field study of autonomous AI agents, analyzing millions of interactions with Perplexity’s “Comet” browser agent.

The “Agentic Divide”: Adoption is not democratized; it tracks closely with socioeconomic status. Usage intensity correlates strongly with GDP per capita ($r=0.85$) and education levels ($r=0.75$). The user base is dominated by knowledge-intensive sectors like Digital Technology (28% of adopters), Finance, and Academia.

Mundane Utility over Autonomy: Contrary to visions of complex autonomous decision-making, 57% of all agent activity is concentrated in Productivity (36%) and Learning & Research (21%).

The Workflow Stack: Agents are primarily deployed to manage administrative and preparatory labor. The top environments accessed are Google Docs (12%), Email Services (11%), and LinkedIn (9%).

Top Tasks: Activity is highly concentrated; the top 10 tasks (out of 90) account for 55% of all queries, led by “assisting with exercises,” “summarizing research,” and “creating/editing documents.”

Conclusion: Early data suggests general-purpose agents currently function as “productivity exoskeletons” for the global knowledge elite, reinforcing existing digital labor hierarchies rather than disrupting them.

Around UVA: Library Sprints — Collaborations for Research and Teaching

The University of Virginia Library invites faculty and staff to apply for Library Sprints, an intensive three- to five-day program supporting research and teaching that pairs participants with teams of expert librarians, technologists, and other information specialists to advance a defined research project or pedagogical challenge. Library Sprints are designed to remove distractions, concentrate expertise, and generate tangible outcomes in a short, focused period of time.

Apply by March 2nd!

Upcoming AI Events @ UVA

Virginia AI Symposium: Advancing Teaching and Learning in the Age of Generative AI

January 23rd, 2026 (10-4) — TOMORROW!

University of Virginia: Shumway Hall

Only registration for the virtual track remains open!

This event is organized by UVA’s Center for Teaching Excellence, UVA’s McIntire School of Commerce, George Mason’s Stearns Center for Teaching and Learning, and JMU’s Center for Faculty Innovation, funded by the FFEI grant administered by the State Council of Higher Education for Virginia (SCHEV).

*When you register, proceed to the Log In button (top-right corner) and Register for a New Account.

Anyone with questions should reach out to Breana Bayraktar: bbayrakt@gmu.edu

2026 Fellowships in AI Research (FAIR) Symposium

🗓 January 30, 2026 | Forum Hotel

Join us at The Forum Hotel for the Fellowships in AI Research (FAIR) Research Symposium. Hear from current fellows as they share their latest research and be among the first to meet the 2026 cohort.

Knowledge Continuum — The State of AI in the Commonwealth

🗓 February 07, 2026 | 4:30 PM–7:00 PM | Rosslyn, VA

Join us on Feb. 7 at Sands Family Grounds in Rosslyn, VA, for the winter edition of the Knowledge ∞ Continuum, a technology-led conversation series from the Center for the Management of IT (CMIT), designed to connect research with real-world practice.

This program will feature the launch of the State of AI in the Commonwealth report, co-sponsored by the Center for the Management of IT and AI Research @ UVA.

The Future With AI: Policies, Ethics, and Governance

🗓 February 10, 2026 | 1:00 PM–2:00 PM | Virtual

Artificial Intelligence is no longer a distant concept; it’s a prevailing force shaping nearly every aspect of our lives. Join UVA experts Renée Cummings, School of Data Science, and Mona Sloane, School of Data Science & Media Studies, for a thought-provoking conversation on the policies, ethics, and governance shaping AI’s future. From cutting-edge research to innovation, this session explores how we can guide AI responsibly.

The Automated Scholar: Research in the Age of Powerful AI Agents

🗓 February 17, 2026 | 11:00 AM–12:00 PM | Shannon Library

In this session, join Anton Korinek for the following talk:

Building AI systems that can pursue novel research turns out to look a lot like raising graduate students: the hard part is judgement, not knowledge. As AI systems become capable of autonomously researching the literature, advancing hypotheses, analyzing data, and drafting research outputs, we face the challenge of instilling not just facts and techniques but the expert intuition about what questions matter, what methods are appropriate, and what constitutes a genuine contribution. Drawing on ongoing work developing specialized AI systems for research, this talk explores how to build domain expertise into AI systems and the meta challenges we face: how to verify what AI produces, who bears responsibility for its conclusions, and what role remains for human expertise.

Exploring the Value Chain of Ethical AI: Dive into Data Centers

🗓 February 17, 2026 | 1:00 PM–7:00 PM | Darden School

Hosted by the LaCross AI Institute, this event brings together industry experts to discuss data centers as critical AI infrastructure: the sector’s current historic growth, the professional opportunities it offers, and the business and societal challenges that must be addressed. Open to the entire UVA community.

HooHacks 2026

🗓 March 23–24, 2026 | 24-hour hackathon

Virginia’s largest hackathon and one of the top 50 collegiate hackathons nationally. Teams compete across categories including AI/ML applications, with $10,000+ in prizes and workshops from sponsors like Google Cloud, Intel, and Capital One. Open to students 18+.

Coming Soon: Faculty Voices

AI and the Environment: Seeing Impact From Both Sides. Xi Yang, an Associate Professor in the University of Virginia’s Department of Environmental Sciences, and Lauren Bridges, an Assistant Professor in UVA’s Department of Media Studies, explore how artificial intelligence intersects with the environment. From revealing hidden climate damage in coastal forests to examining the environmental and community impacts of data centers, their work highlights both the promise and the costs of these technologies. Together, they offer perspectives on what responsible AI research looks like as environmental challenges continue to grow.

UVA AI Resources

AI for Academic Excellence - Student Toolkit: A comprehensive guide for students on the best uses of AI.

AI Agents in Economic Research (Anton Korinek): A guide for researchers on the use of AI agents.

UVA Claude Builders Student Club: A 250+ strong group for those interested in development via Claude.

UVa AIML Seminar: A seminar on artificial intelligence, machine learning, and their applications.

UVA Podcasts We Listen to

Co-Opting AI: Public Conversations About Artificial Intelligence and Society:

Prof. Mona Sloane’s series Co-Opting AI is a virtual public speaker series that interrogates and demystifies AI.

UVA Data Points: Podcast from the School of Data Science.

HOOS in STEM: From Prof. Ken Ono, this series showcases the marvelous cornucopia of STEM at UVA, from the latest innovations to growth inside and outside the classroom.
